The (Actually) Scary Parts of Tech in 2026

Large language models have changed the pace of software development in a way that I haven't experienced in my entire career. There are lots and lots of things to think about regarding the current state of tech: deepfakes, energy consumption, the effect on children, job displacement, etc. As a software engineer, I have had so many conversations with people about their sentiments around AI, spanning from extreme optimism to existential dread.

I err on the side of neutrality. Humans are adaptable. We've been through technological revolutions before. But there are some technological developments that make me more uneasy and that I don't think are getting the attention they deserve. There are also some that get plenty of attention and don't worry me much at all.

Things That Scare Me

Quantum Computers and the End of Encryption

This has me feeling like what I imagine Y2K felt like. Existential threats to major systems. Doomsday on some calendar date. Uncertainty everywhere.

And, in the end, nothing really happened with Y2K.

But, nothing happened because of enormous efforts from developers all over the world to ensure that systems were robust enough to handle the change.

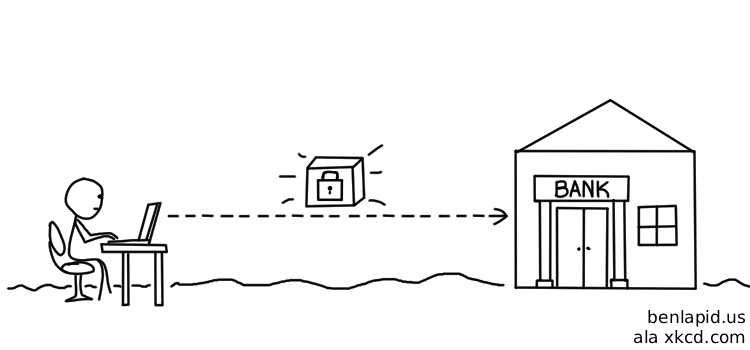

Quantum computing and encryption is a similar-shaped problem. For context: modern digital infrastructure relies on encryption algorithms. Simply put, these algorithms allow data to be passed around securely. You can imagine that data is sitting inside of a locked box, and ONLY the sender and the recipient have a key to that locked box.

In transit, anyone can see that locked box. But in order to crack it open, it would take a modern computer an impractical amount of time to guess the key. We're talking thousands to millions of years on a classical computer, depending on the algorithm. Research from MIT Technology Review and others has explored how quantum computers change this math dramatically.
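To put rough numbers on "an impractical amount of time", here is a minimal back-of-the-envelope sketch. It assumes an attacker who can test one trillion keys per second (a made-up rate, not a measured one) and who finds the key after searching half of all possibilities on average. The function name and the key sizes are my own illustrative choices, not figures from any specific algorithm's documentation.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def brute_force_years(key_bits: int, guesses_per_second: float) -> float:
    """Average years needed to guess a key of the given size.

    Assumes the key is found after trying half of all possible keys,
    which is the expected case for a blind search.
    """
    keyspace = 2 ** key_bits
    expected_guesses = keyspace / 2
    return expected_guesses / guesses_per_second / SECONDS_PER_YEAR


# Assumed attacker speed: one trillion guesses per second (hypothetical).
for bits in (56, 80, 128):
    years = brute_force_years(bits, guesses_per_second=1e12)
    print(f"{bits}-bit key: about {years:.3g} years")
```

Under these assumptions, every extra bit doubles the work. A small key falls in under a day, an 80-bit key takes thousands of years, and a 128-bit key takes far longer than the age of the universe.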

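I can't pretend to know how quantum computers work, but from my limited understanding, they don't simply guess keys faster than classical computers do. Algorithms like Shor's exploit the mathematical structure underneath widely used public-key encryption such as RSA, so a large enough quantum computer wouldn't need to guess at all. This could bring the challenge of cracking a key from millions of years down to potentially hours.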

And this isn't a distant future -- early-stage quantum computers have already demonstrated this in lab settings. Recent research suggests that the gap between theoretical estimates and real machines is closing faster than many expected.

I haven't even gotten to the thing that actually scares me yet.

The reason quantum computing itself doesn't really scare me is that security is a cat-and-mouse game. As we develop ways around our encryption tooling, we also harden our encryption algorithms. Yes, it'll be a TON of work to migrate systems to quantum-resistant encryption. But it'll be necessary, and the alternative -- not doing that work -- means essentially the collapse of software as we know it. So we'll figure it out. We always do.

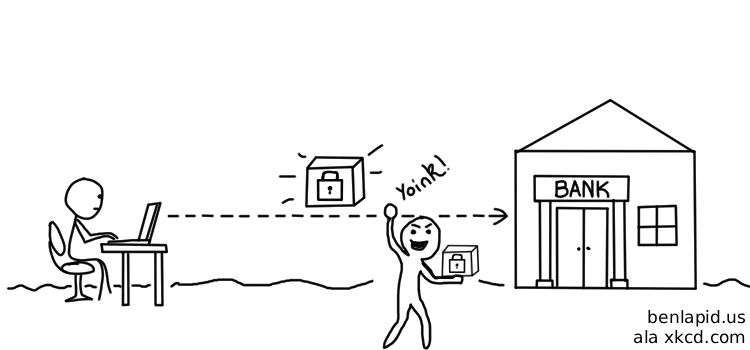

The thing that actually scares me is what could be (and is likely) happening right now. It has a name: Harvest Now, Decrypt Later.

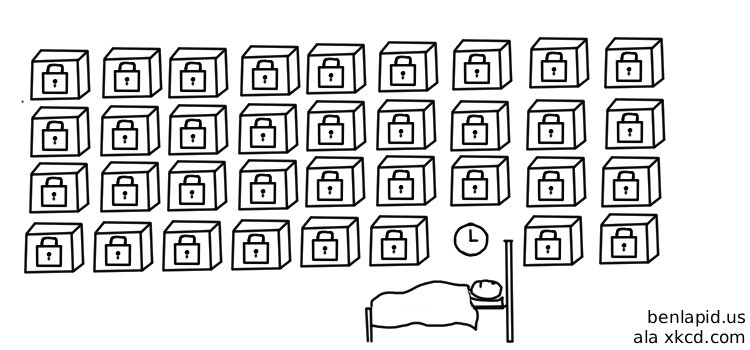

I mentioned that our data is in this locked box in transit. There's NOTHING stopping a bad actor from making a copy of that box. They can copy it without anyone knowing, too. It's just floating through the open internet, currently protected because no one can crack that key. And that's true as of 2026, except in lab settings where it's still expensive and hard.

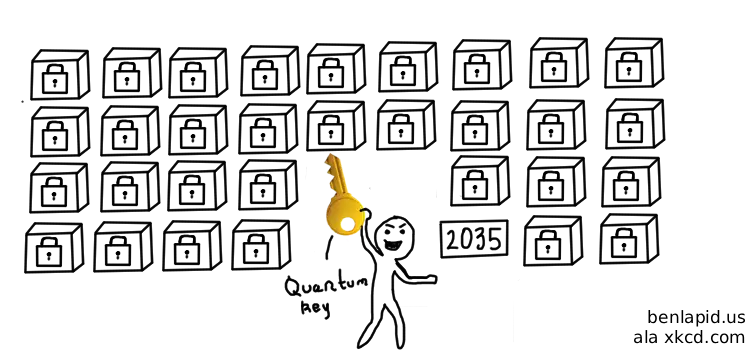

So... a bad actor can just keep that copy and wait until breaking it is neither expensive nor hard. That's the "harvest now" part. The "decrypt later" part is the quantum computer in 2035 (or sooner) that opens every single box they've been hoarding.

And that's coming up soon. In fact, Google just moved their target date forward to 2029. The NSA is on track to harden their systems with a deadline of 2035.

I don't really see how this doesn't result in some sort of widespread systemic change that I'm not sure we're ready for. I feel more confident that we can make the technical shift to quantum-resistant encryption algorithms. To be clear, Google is signalling that as of 2029, they'll be ready for quantum threats. Which implies the existence of quantum threats in 2029.

If these quantum threats are cheap enough to execute, 2029 could very well be the year that the keys to all these boxes are finally computed. This could be medical records, SSNs, your mother's maiden name, etc. -- all the things that we assume are safe because they're encrypted. All things that could be getting intercepted as we speak!

So, sure, we are hardening the locks. But the contents of the boxes have already been copied, so it's only a matter of time before they're opened.

Claude Mythos

Anthropic announced Mythos, and I think it deserves some attention from folks who might not otherwise be paying close attention to this space.

I realize that many readers of this site are not as entrenched in technology as I am. Mythos is the next AI model from Anthropic (the company behind Claude). I'll try to keep this accessible.

A Quick Aside

Again, I am generally pretty neutral on AI. I use it daily at work. I conceptually don't believe in AI art. I think generally it's a super useful tool that has and will continue to change a ton of industries. I think it's definitely in a financial bubble, but by no means does that mean it's going to go away. I think the bubble popping will just mean that tons of AI wrappers will go under and there will be a few large players left, happy to squeeze more money out of users and enterprises that have become reliant, in order to close the gigantic pricing gap between what folks are currently willing to pay and the cost of actually running these tools.

Anyway -- Mythos might be just hype. But it's also a scary concept that I don't think we're ready for.

These models are better than me at writing code. I don't take that personally. I don't think people are very good at writing code. The problem Mythos (and future models) will introduce is that they're better than people at writing code. And now, more people will have access to tools that are better than humans at writing code.

Allow me to justify my economics degree again.

I think there's a good opportunity to use economics to illustrate how this maps out. The supply of "security knowledge" has shifted outward, which makes the cost of finding exploits go down. So the new equilibrium is: more exploits.
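For anyone who wants to see the supply shift in numbers, here is a toy sketch with straight-line supply and demand curves. Every number in it is invented for illustration. "Price" stands in for the cost of finding an exploit and "quantity" for the number of exploits found. The `equilibrium` helper is a hypothetical name of my own, not something from an economics library.

```python
def equilibrium(demand_intercept, demand_slope, supply_intercept, supply_slope):
    """Solve a linear market for the point where supply equals demand.

    Demand: quantity = demand_intercept - demand_slope * price
    Supply: quantity = supply_intercept + supply_slope * price
    """
    price = (demand_intercept - supply_intercept) / (demand_slope + supply_slope)
    quantity = demand_intercept - demand_slope * price
    return price, quantity


# Made-up numbers. Only the supply intercept changes between the two cases,
# which is what an outward shift in supply looks like on a linear curve.
before = equilibrium(100, 2, 10, 1)
after = equilibrium(100, 2, 40, 1)

print("before:", before)  # (30.0, 40.0)
print("after: ", after)   # (20.0, 60.0)
```

With demand held fixed, pushing supply outward lowers the price and raises the quantity. That is the "cheaper exploits, more exploits" outcome described above.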

But that's just part of the story. There's inherent asymmetry here. Attackers only need one vulnerability to break a system. Engineers need to harden against ALL attacks, which just takes longer and is more expensive.
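The asymmetry can be sketched with a little probability. This is a simplified model of my own, not a measurement. It assumes a system built from many components, each with the same small, independent chance of containing an exploitable flaw. Real flaws are neither uniform nor independent, so treat the numbers as an illustration only.

```python
def chance_of_at_least_one_flaw(components: int, flaw_probability: float) -> float:
    """Probability that at least one component is exploitable.

    Assumes every component has the same, independent chance of a flaw.
    The defender wins only if ALL components are clean, which happens
    with probability (1 - flaw_probability) ** components.
    """
    all_clean = (1 - flaw_probability) ** components
    return 1 - all_clean


# Hypothetical scenario: each component has a 1% chance of a flaw.
for count in (1, 10, 100, 1000):
    risk = chance_of_at_least_one_flaw(count, 0.01)
    print(f"{count:>4} components: {risk:.1%} chance of at least one flaw")
```

Even when each piece is 99% likely to be fine, the chance that everything is fine shrinks quickly as the pile of dependencies grows. The attacker is betting on "at least one", and the defender is betting on "all of them".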

According to Anthropic's own report, Mythos has found thousands of zero-day vulnerabilities -- previously unknown software flaws that can be exploited to gain access to things you shouldn't have access to. It found a 27-year-old bug in OpenBSD, a 16-year-old vulnerability in FFmpeg, and autonomously wrote a full remote code execution exploit on FreeBSD that grants root access to unauthenticated users. These are not toy examples.

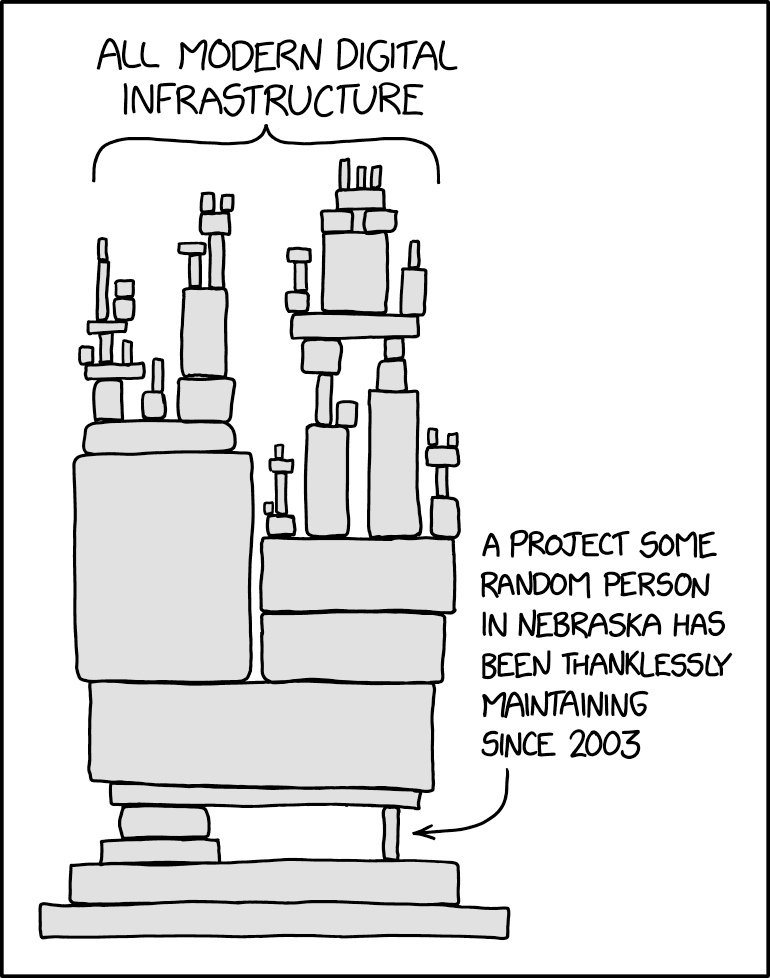

As it stands today, modern software infrastructure is like a tower, where most new software stands on top of the (presumably hardened) software of years past. Mythos is shaking this tower. And due to the asymmetrical cost between attackers and fix-ers, I am concerned that we won't be able to patch faster than the attacks come through.

Again, this is a right-now problem. This model is being used at Apache and Google and others, and some private company is responsible for ensuring that this tower doesn't come crumbling down.

You might be thinking, "But Ben, can't we just use Mythos to find all the bugs in our software?" To that I say: fair point. But, it comes down to this asymmetry. If Mythos can find a vulnerability in an operating system that's notoriously hardened and has been around for decades, what does that mean for the software we're building today?

Take a look at the dependencies of a popular package like axios. Axios was compromised just a few weeks ago using a different attack vector.

How do we possibly ensure that all our tools are secure? How can we patch faster than these models can find vulnerabilities? How can the good guys outpace the bad guys when the bad guys only need to find one vulnerability to break a system?

Things That Don't Scare Me

I Will Be Out of Work

I'm not trying to be cocky. I am just constantly being told that I am ABOUT to be replaced by machines, and I just haven't seen anything convincing enough to believe that claim yet.

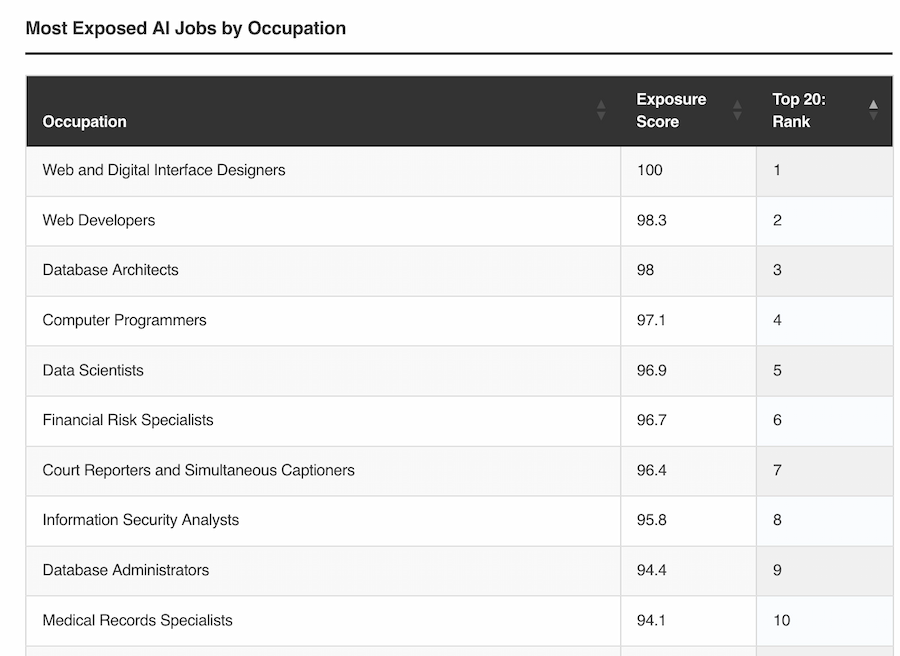

Source: Most Exposed AI Jobs by Occupation

I develop software for the web, and these tools are really good at web development. Maybe at one point, I was spending a lot of time building buttons and writing copy and writing CSS and all the other things you'd expect from that role. Sure -- I think that job is mostly dead. I wouldn't tell a college student to expect to do that after graduation.

I just don't think that's my job in 2026. The value I add is not in the code I'm writing. Ask any senior engineer and they'll tell you that code is a liability. The value I add is from my ability to think into the future, design systems that can scale, squeeze the actual product out of a set of hazy, handwaving goals, and be accountable for that deliverable.

The reason I can't foresee the death of software engineers (at least based on what I've seen so far) is that someone will need to be accountable for the things the computer spits out. Even if someone is shipping projects out of Lovable, they'll need to be responsible for ensuring that tool works 100% of the time.

Are they confident it works at scale? Are they confident it's accessible? Compliant with COPPA? Compliant with SOC 2? Mobile friendly? Works on foldable phones? Won't cause an exponentially growing AWS bill? Ready for internationalization? Hardened against modern attack vectors?

I'd argue that if someone is doing all that, they're still engineering. Maybe they aren't called a web developer anymore, but I'll probably be doing that job, whatever we end up calling it. And if a computer ends up doing that and fails somewhere along the way, who is responsible? Who does the CEO fire when Lovable messes up and runs up a $100,000 AWS bill?

Until Claude can meaningfully take accountability, I think I'm fine.